Design Priorities, Constraints and Readiness

Executive Summary

- AI data centers are not simply conventional facilities with more servers. They place materially heavier demands on power delivery, heat rejection, network fabric, controls, and phased expansion planning.

- The first bottlenecks often appear outside the server hall itself: utility power availability, electrical pathway quality, cooling topology, mechanical plant capacity, and the ability to scale without weakening resilience.

- Liquid cooling is becoming increasingly important for higher-density environments, but it is not a stand-alone answer. It affects plant design, hydraulic strategy, controls, maintainability, and heat-rejection architecture.

- AI readiness should be validated through engineering analysis rather than assumed from rack space alone. Thermal modeling, electrical studies, network assessment, coordination review, and staged capacity analysis are central to a credible decision.

- For many projects, the real decision is not simply whether a site can “host AI,” but whether retrofit, selective upgrade, modular expansion, or new-build is the most defensible route.

- For owners, operators, developers, and investors, the quality of front-end engineering and technical due diligence often determines whether an AI data center project remains buildable, financeable, and operationally stable.

- The core challenge for data centers lies in meeting the ever-increasing demand for processing power and storage needed for AI applications. AI workloads require vast amounts of computing power, often reaching exascale levels, to process complex models and algorithms. This exponential growth in demand for computational resources and storage capacity is driving significant transformation and innovation in the data center industry.

AI workloads are changing data center requirements at infrastructure level, not just at IT level. Dense GPU environments, accelerated compute, low-latency interconnects, and sustained training or inference loads are forcing a rethink of power architecture, cooling strategy, control systems, and expansion logic.

For technical and commercial decision-makers, the discussion has moved beyond how many racks a building can physically hold. The more relevant questions are whether the facility can absorb higher densities, whether the utility and mechanical systems can support the intended growth path, whether the resilience model still holds under new operating conditions, and whether the chosen route is viable in the local regulatory and infrastructure context.

This article is written for readers assessing AI infrastructure from a facility, project, or investment perspective. It does not focus on chip selection or model architecture. It focuses on what AI means for the physical and operational infrastructure that must support those workloads.

It is written from the perspective of engineering feasibility, infrastructure risk, and delivery practicality, and reflects the context of Azura’s data center design, Tier-aligned infrastructure capability, technical due diligence, and multi-disciplinary engineering services.

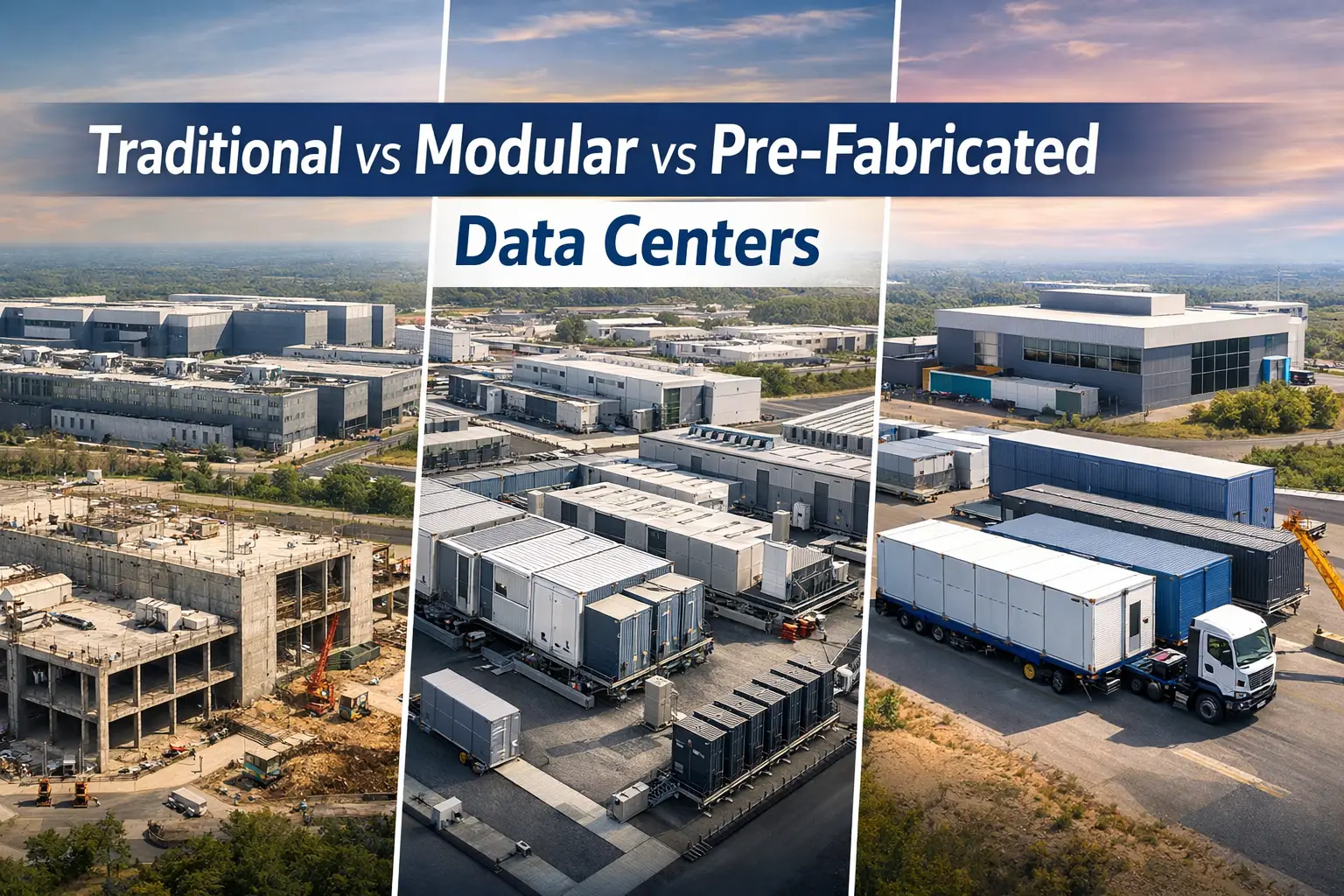

So What Is An AI Data Center?

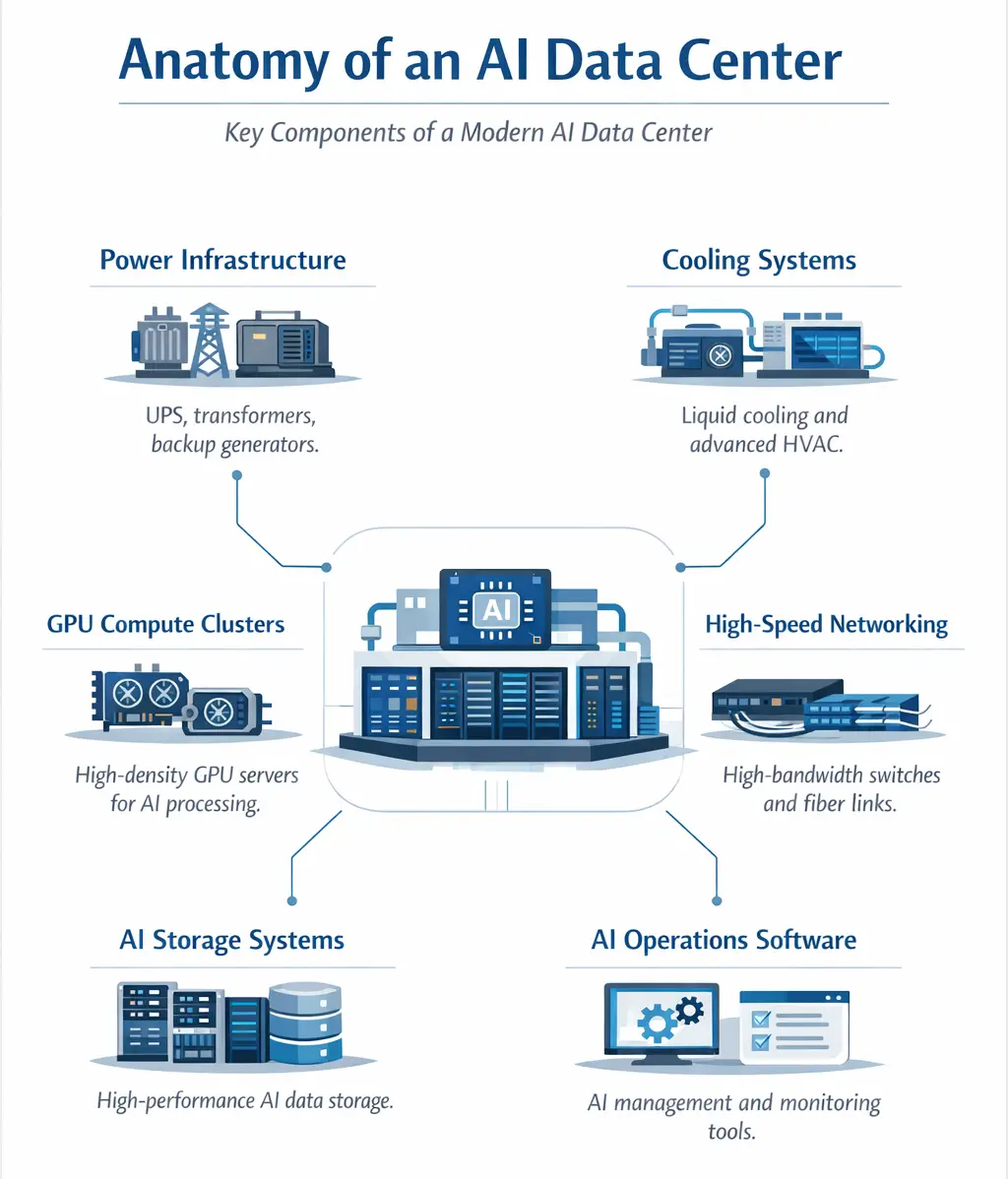

An AI data center is a data center designed or adapted to support high-density AI workloads, typically involving accelerated compute, high-bandwidth networking, and more demanding power and thermal conditions than conventional enterprise compute environments.

In practice, it is defined less by the presence of GPUs alone and more by whether its electrical, mechanical, network, controls, and resilience architecture can support those workloads safely, efficiently, and at scale.

At a Glance

- Best-fit design depends on workload profile, density target, resilience requirement, and utility context.

- Power and cooling constraints usually determine delivery strategy before white-space capacity does.

- Retrofit is sometimes viable, but only where structural, electrical, and mechanical headroom genuinely exists.

- Liquid cooling improves the feasibility of higher densities, but it also introduces plant, controls, and maintenance implications.

- AI readiness is a systems question, not a feature list.

- Azura Consultancy’s relevant capabilities include feasibility studies, site selection, critical MEP design, CFD analysis, electrical power-system analysis, reliability studies, BIM coordination, certification support, network infrastructure design, and technical due diligence.

What Makes an AI Data Center Different

A conventional data center can be highly reliable and still be poorly suited to serious AI deployment. The issue is not simply server type. AI workloads change the relationship between compute density, network throughput, cooling response, rack-level power delivery, and future expansion planning.

That changes the engineering problem. The facility team is no longer designing mainly for aggregate IT load. It is designing for localized density, sustained heat rejection, more demanding east-west traffic, tighter control margins, and a narrower tolerance for subsystem weakness.

In practical terms, AI data centers tend to place more emphasis on:

- higher rack and row heat loads

- lower tolerance for network bottlenecks

- more critical power-quality and distribution planning

- tighter integration between facility engineering and IT deployment

- more detailed phasing logic for growth

That is why generic “AI-ready” positioning is often not enough. A facility needs demonstrable capacity, validated assumptions, and a credible upgrade path.

The Constraints That Usually Appear First

In most AI data center projects, the first limiting factor is not floor space. It is one or more upstream constraints that determine whether higher-density deployment is technically and commercially viable.

These constraints often emerge early because AI infrastructure intensifies load concentration. A site may look attractive in broad capacity terms but still fail under closer scrutiny if power delivery timing, cooling topology, or resilience assumptions do not align with the intended deployment.

The most common constraint areas are:

- Grid access and power timing: the site may be promising in principle but commercially unusable if utility delivery does not align with the deployment plan

- Mechanical headroom: chilled water, condenser water, dry cooling, or hybrid plant may not support the target density without major redesign

- Resilience implications: density increases can weaken the intended fault-tolerance or maintainability model if electrical and mechanical paths are not revalidated

- Water and heat rejection: climate, local water conditions, and heat-rejection strategy can make one cooling architecture more viable than another

- Network topology: AI environments place high value on low-latency, high-bandwidth connectivity inside the facility and, in some cases, across campuses or external interconnects

- Permitting and sustainability expectations: these can materially affect delivery risk, stakeholder acceptance, and operating constraints

For many owners and investors, this is the point at which the conversation becomes more commercial than conceptual. A technically possible scheme may still be unattractive if the upgrade path is too slow, too disruptive, or too uncertain, which is why technical due diligence for investors is often needed before major commitments are made.

Cooling Strategy Now Shapes the Facility, Not Just the White Space

Higher-density AI infrastructure is one reason liquid cooling has moved from specialist discussion into mainstream data center planning. That does not mean every AI deployment requires the same cooling solution, but it does mean cooling strategy now has direct implications for building services, plant architecture, controls, maintenance, and future expansion.

What needs to be resolved early includes:

- target rack density and expected density growth

- acceptable operating envelope and temperature range

- choice of air, liquid, or hybrid cooling topology

- CDU strategy, hydraulic arrangement, and leak-management philosophy

- plant redundancy and maintenance isolation approach

- heat-rejection route and climatic suitability

- whether heat recovery is technically and commercially worthwhile

This is where engineering validation matters. A scheme that looks acceptable on paper can still become unstable or inefficient once density increases, equipment moves, or operating conditions shift. Thermal analysis is therefore not just a design task. It is a decision-support tool.
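As a first-pass illustration of why density drives hydraulic design, the sketch below applies the standard heat balance Q = m·cp·ΔT to estimate the coolant flow needed to carry a given heat load. The function name, the water-like fluid properties, and the 100 kW rack figure are assumptions chosen for illustration; glycol mixtures and real CDU loops will differ, and this is not a substitute for hydraulic or CFD analysis.

```python
def required_coolant_flow_lpm(heat_load_kw, delta_t_k,
                              density_kg_m3=997.0, cp_kj_per_kg_k=4.18):
    """Estimate volumetric coolant flow in litres per minute.

    Uses Q = m_dot * cp * delta_T. Default properties approximate water
    near room temperature (an assumption, not a design value).
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    # kW / (kJ/(kg*K) * K) gives mass flow in kg/s
    mass_flow_kg_s = heat_load_kw / (cp_kj_per_kg_k * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / density_kg_m3
    # Convert m^3/s to litres per minute
    return volume_flow_m3_s * 1000.0 * 60.0


# Hypothetical example: one 100 kW rack with a 10 K coolant temperature rise
flow_lpm = required_coolant_flow_lpm(heat_load_kw=100.0, delta_t_k=10.0)
print(f"Required flow: {flow_lpm:.0f} L/min")
```

Under these assumptions the result is roughly 144 litres per minute for a single rack, and halving the allowable temperature rise doubles the flow. That scaling is one reason pipe sizing, pump selection, and CDU strategy need to be resolved early.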

Cooling decisions in AI environments should be validated, not assumed. Azura’s specialist engineering capability includes CFD analysis for data-center white space, cooling and heating load calculations, energy modeling, and related mechanical and hydraulic analysis, which is the kind of work needed when density, airflow stability, liquid-cooling interfaces, and long-term operating efficiency all become design constraints.

Where relevant, Azura’s public certifications also include ASHRAE-linked credentials and LEED AP, which support the credibility of work at the intersection of thermal performance, building systems, energy-conscious infrastructure design, and overall data center sustainability.

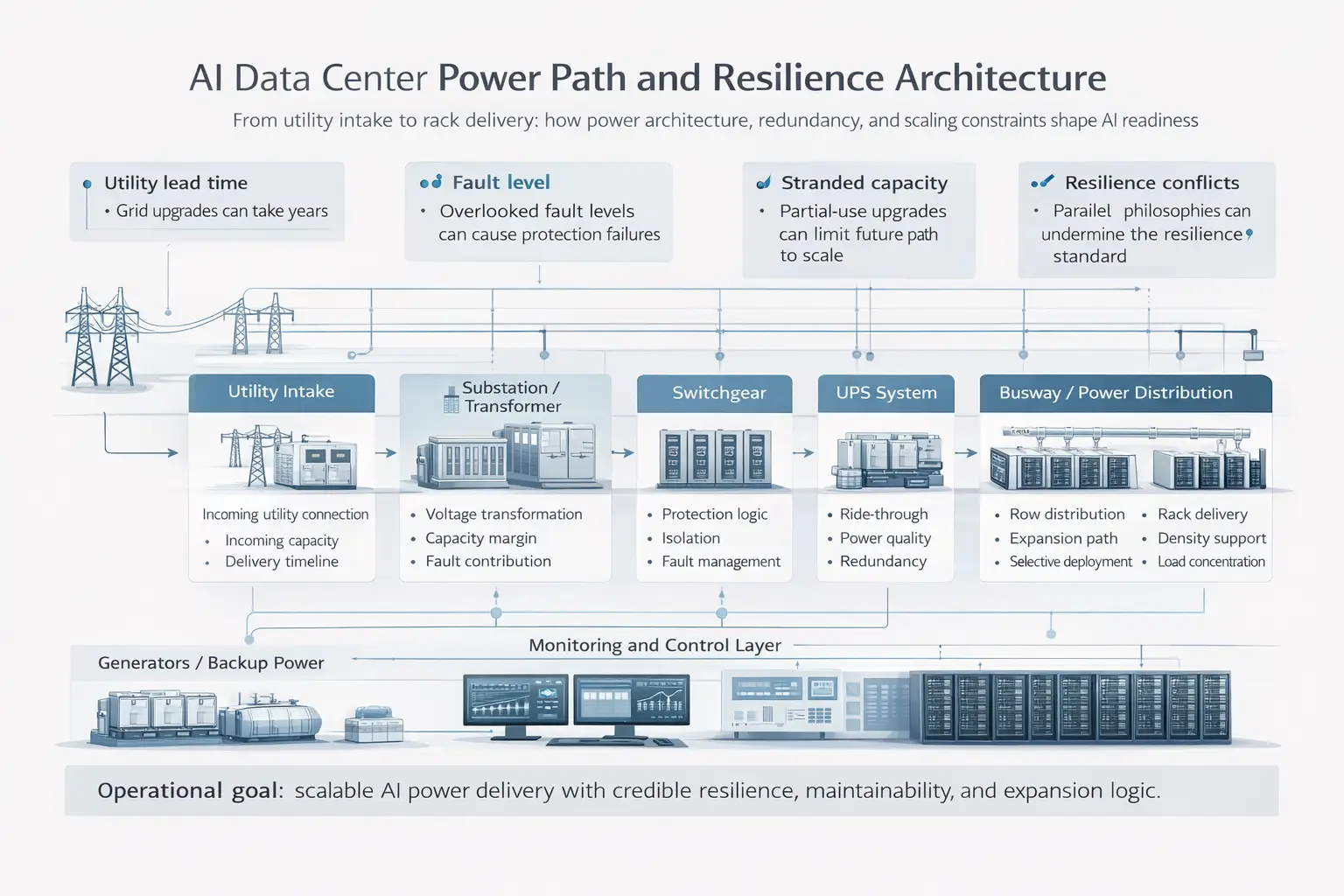

Power Architecture and Resilience Determine Whether Scale Is Real

AI deployments place unusual pressure on the credibility of the electrical design. A scheme may appear scalable on paper but still fail to provide a defensible operating route if transformer capacity, UPS architecture, busway strategy, generator philosophy, fault levels, harmonics, protection coordination, or maintenance assumptions have not been tested properly.

The question is not only how many megawatts can be secured. It is whether the electrical pathway from utility intake to rack-level delivery can support the intended density with acceptable resilience, maintainability, and fault isolation.

Questions that should be answered before scaling include:

- Can the intended power path support the target AI density under the required resilience model?

- Do short-circuit and protection assumptions still hold after expansion?

- Are generator, fuel, and runtime assumptions still credible at the higher load profile?

- Is the UPS topology appropriate for the operational model and maintenance regime?

- Will staged deployment create stranded capacity or repeated rework?

- Is energy storage being assessed only for backup, or also for load management and grid strategy?

This is one reason data center certification and classification frameworks remain relevant. They do not replace engineering judgment, but they help structure it.

For AI facilities, resilience assumptions need to be more than theoretical. Azura’s data center engineering offer explicitly includes Uptime Tier certification support, Tier III and Tier IV compliant design, emergency power and redundancy planning, electrical power-system analysis, and reliability and availability analysis.

That matters because high-density AI environments magnify the consequences of weak assumptions in utility strategy, UPS topology, generator philosophy, harmonics, short-circuit coordination, and staged expansion planning. Azura’s public certifications page also includes Uptime ATD and ATD Expert credentials, which are directly relevant to the discipline of Tier-oriented data center design and review.
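The redundancy and stranded-capacity questions above can be explored with simple arithmetic before any detailed study is commissioned. The sketch below is a minimal illustration, assuming a modular UPS arrangement with one module held in reserve (N+1) and a loading cap; the function names, module sizes, loading limit, and rack densities are hypothetical and not taken from any specific design.

```python
def usable_ups_capacity_kw(module_kw, modules_installed,
                           redundant_modules=1, max_loading=0.9):
    """Capacity available to IT load with some modules held in reserve."""
    active = modules_installed - redundant_modules
    if active <= 0:
        raise ValueError("need at least one non-redundant module")
    return active * module_kw * max_loading


def max_racks(usable_kw, rack_kw):
    """Whole racks supportable at a given per-rack power density."""
    return int(usable_kw // rack_kw)


# Hypothetical plant: five 500 kW modules in an N+1 arrangement
usable_kw = usable_ups_capacity_kw(module_kw=500.0, modules_installed=5)
for rack_kw in (10.0, 80.0):
    print(f"{rack_kw:.0f} kW racks supported: {max_racks(usable_kw, rack_kw)}")
```

With these illustrative numbers, 2,500 kW of installed UPS yields 1,800 kW of usable capacity, which supports 180 racks at 10 kW but only 22 racks at 80 kW. The same electrical plant therefore serves very different white-space layouts, which is why rack count alone is a poor proxy for AI capacity.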

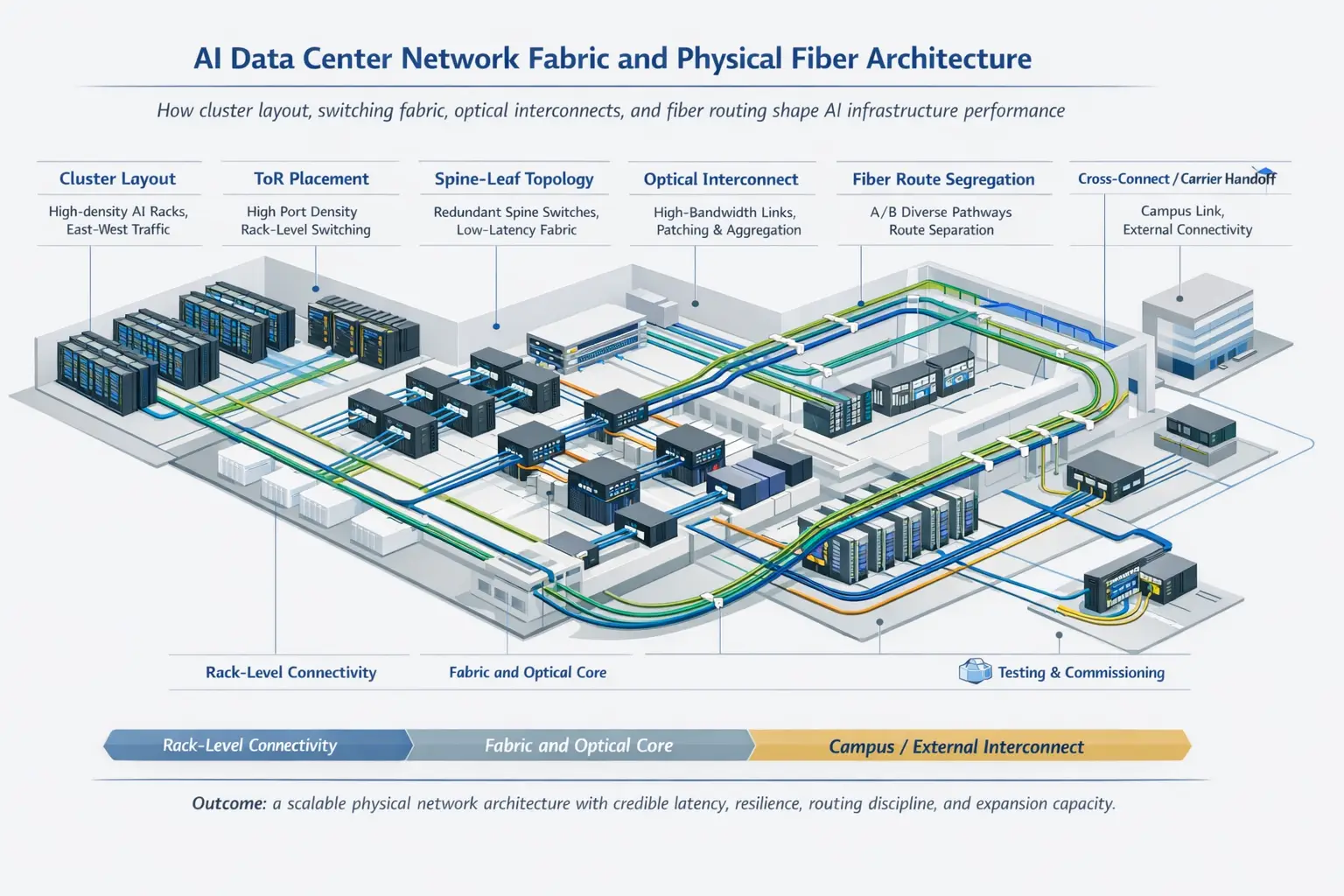

Network Design Is Part of the Physical Infrastructure Problem

AI data centers depend not only on powerful compute but on fast, predictable movement of data between systems. That makes network design a core performance issue rather than a secondary utility.

In many AI environments, east-west traffic patterns have a direct effect on workload efficiency, training times, and cluster utilization. A facility that performs adequately for conventional enterprise hosting may still create friction for AI workloads if cable routes, switch placement, port density, pathway resilience, or expansion logic are weak.

This is also where physical telecom and fiber design matter more than many non-specialists assume. Network performance depends on more than switch specifications. It also depends on route planning, cable architecture, fiber selection, splice quality, DWDM planning where relevant, testing, and commissioning discipline.

In practice, network readiness should consider:

- internal fiber and copper pathway capacity

- physical route planning and segregation for resilience

- switch and fabric placement relative to cluster layout

- port density and cable-management implications

- future expansion pathways without fragmentation

- DWDM or campus/interconnect design where multi-building or long-haul links are relevant

- testing and commissioning to validate real performance before service

This is one area where Azura’s telecommunications network design services become particularly relevant, because AI performance can be constrained just as easily by weak physical network architecture as by weak power or cooling design.
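One quick check on fabric placement and port density is the oversubscription ratio of a leaf switch: total server-facing bandwidth divided by total uplink bandwidth. The sketch below is illustrative only; the function name, port counts, and link speeds are assumptions, and a ratio of 1.0 is the figure commonly described as non-blocking.

```python
def oversubscription_ratio(downlink_ports, downlink_gbps,
                           uplink_ports, uplink_gbps):
    """Ratio of server-facing bandwidth to uplink bandwidth on one switch."""
    downlink_total = downlink_ports * downlink_gbps
    uplink_total = uplink_ports * uplink_gbps
    if uplink_total <= 0:
        raise ValueError("uplink bandwidth must be positive")
    return downlink_total / uplink_total


# Hypothetical leaf switch: 32 x 400G to servers, 8 x 800G to the spine
ratio_constrained = oversubscription_ratio(32, 400, 8, 800)

# Same leaf with 16 x 800G uplinks
ratio_non_blocking = oversubscription_ratio(32, 400, 16, 800)

print(ratio_constrained, ratio_non_blocking)
```

In this example, doubling the uplinks moves the ratio from 2.0 to 1.0. It also doubles the fiber count leaving each leaf, which feeds directly into the pathway-capacity and cable-management items listed above.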

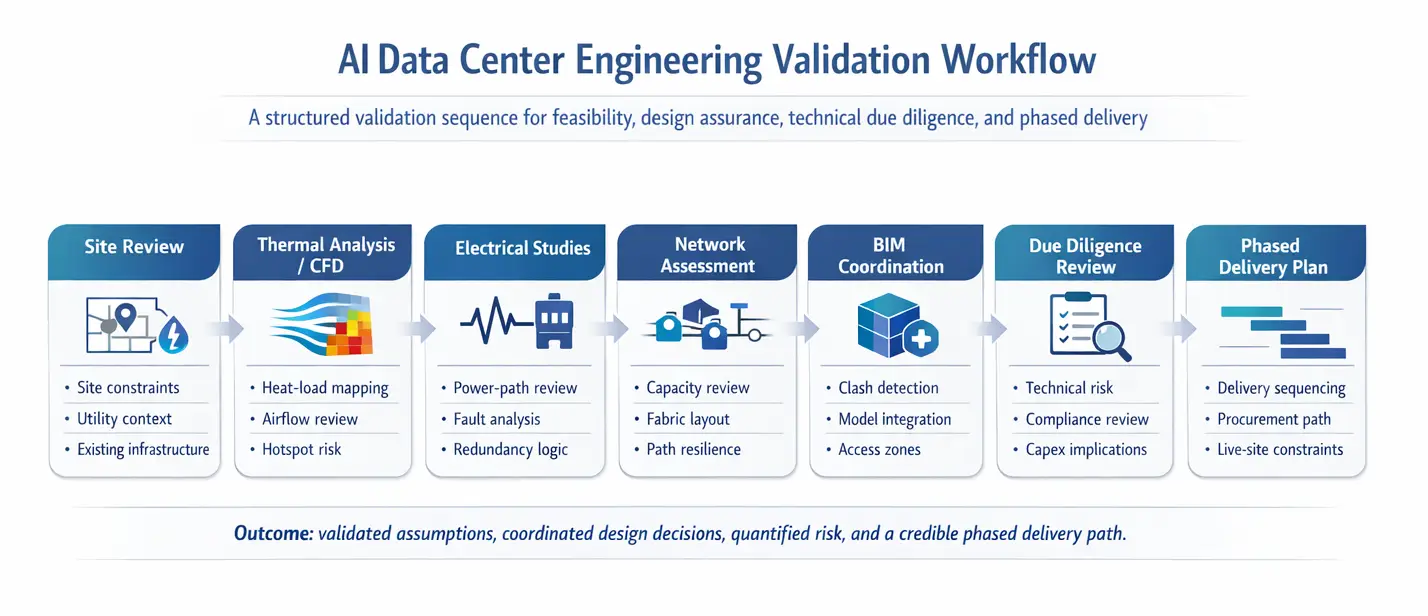

How AI Data Center Readiness Is Validated in Practice

An AI data center strategy is not technically credible if it stops at describing features. Readiness needs to be tested through structured engineering analysis.

The exact scope depends on project type, but the objective is consistent: verify actual constraints, quantify upgrade implications, and separate nominal capacity from usable capacity.
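The distinction between nominal and usable capacity can be made concrete with a minimal sketch: a facility's usable capacity is bounded by its weakest subsystem. The domain names and kilowatt figures below are hypothetical placeholders, not measurements from any real site.

```python
def usable_capacity_kw(headroom_by_domain):
    """Return (limiting_domain, usable_kw) for a set of verified limits."""
    if not headroom_by_domain:
        raise ValueError("at least one domain is required")
    limiting = min(headroom_by_domain, key=headroom_by_domain.get)
    return limiting, headroom_by_domain[limiting]


# Hypothetical verified limits per domain, in kW of IT load
site_limits_kw = {
    "utility_intake": 12000,
    "ups_and_distribution": 9000,
    "cooling_plant": 6500,
    "heat_rejection": 7000,
}
nominal_kw = 10000  # hypothetical headline figure for the same site

limiting_domain, usable_kw = usable_capacity_kw(site_limits_kw)
readiness_gap_kw = nominal_kw - usable_kw
print(limiting_domain, usable_kw, readiness_gap_kw)
```

Here the cooling plant caps usable capacity at 6,500 kW against a 10,000 kW headline figure, leaving a 3,500 kW readiness gap. Real assessments need validated inputs for each domain; the point of the sketch is only that the minimum, not the average or the headline, sets the limit.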

A practical validation process typically includes four layers.

Thermal and Mechanical Validation

This usually includes review of heat-load distribution, airflow or liquid-cooling strategy, containment logic, plant capacity, and how future density changes affect the thermal envelope.

Typical outputs include:

- hotspot identification

- cooling bottleneck mapping

- plant-capacity limits

- density thresholds by zone or hall

- implications for energy efficiency and controllability

Electrical Validation

This should include power-path review, load-growth modeling, redundancy verification, protection and coordination studies, harmonics and power-quality review where relevant, and staged expansion analysis.

Typical outputs include:

- verified electrical headroom

- identified single points of weakness

- expansion triggers

- fault-level implications

- upgrade sequencing requirements

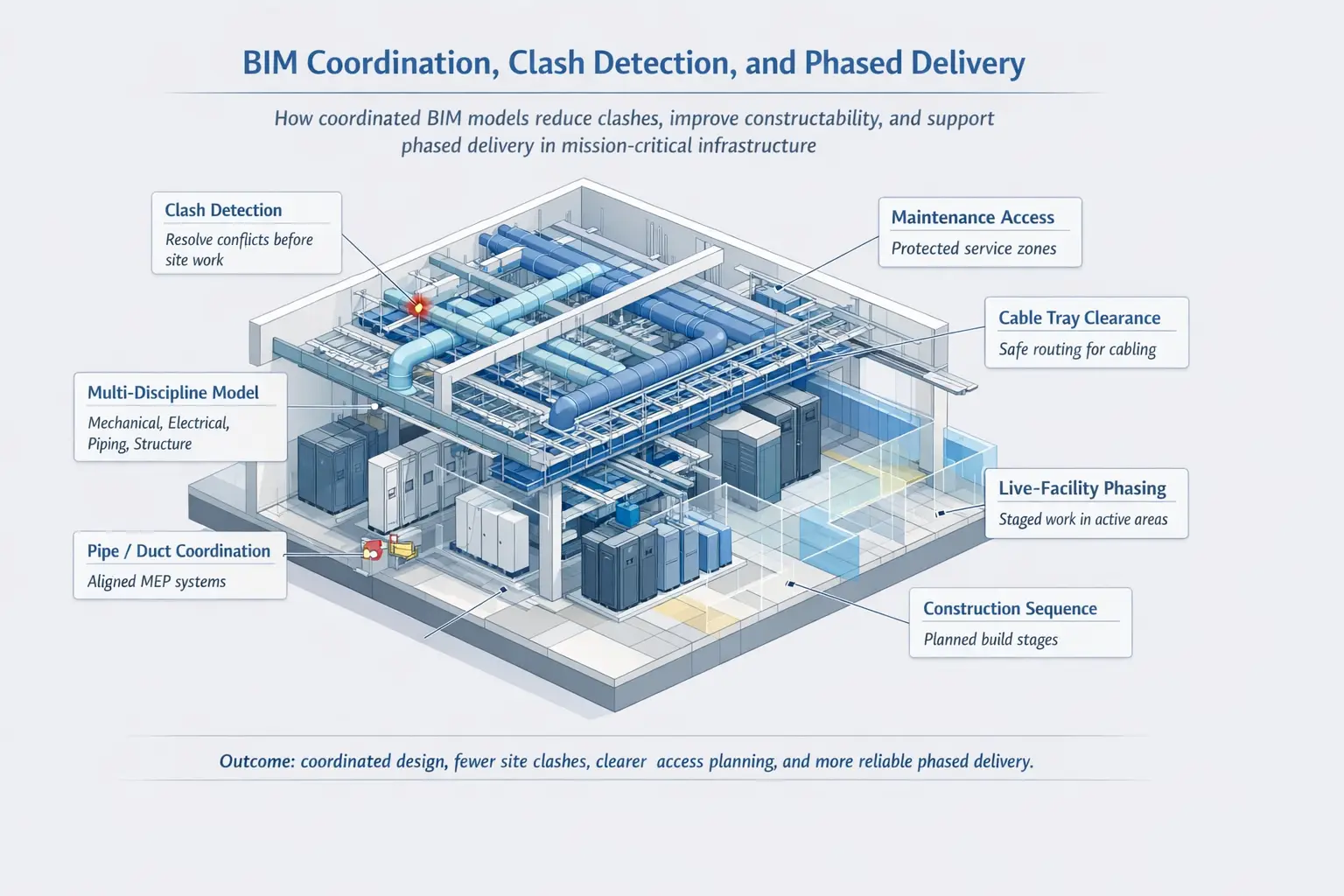

Facility Coordination and Delivery Validation

For retrofit and fast-track programs, coordination risk can be as important as pure design adequacy. BIM-led coordination, clash detection, and construction phasing review help determine whether upgrades are actually deliverable in a live environment.

Typical outputs include:

- clash and access issues identified early

- clearer installation sequencing

- reduced rework risk

- more realistic outage and phasing assumptions

- better alignment between disciplines

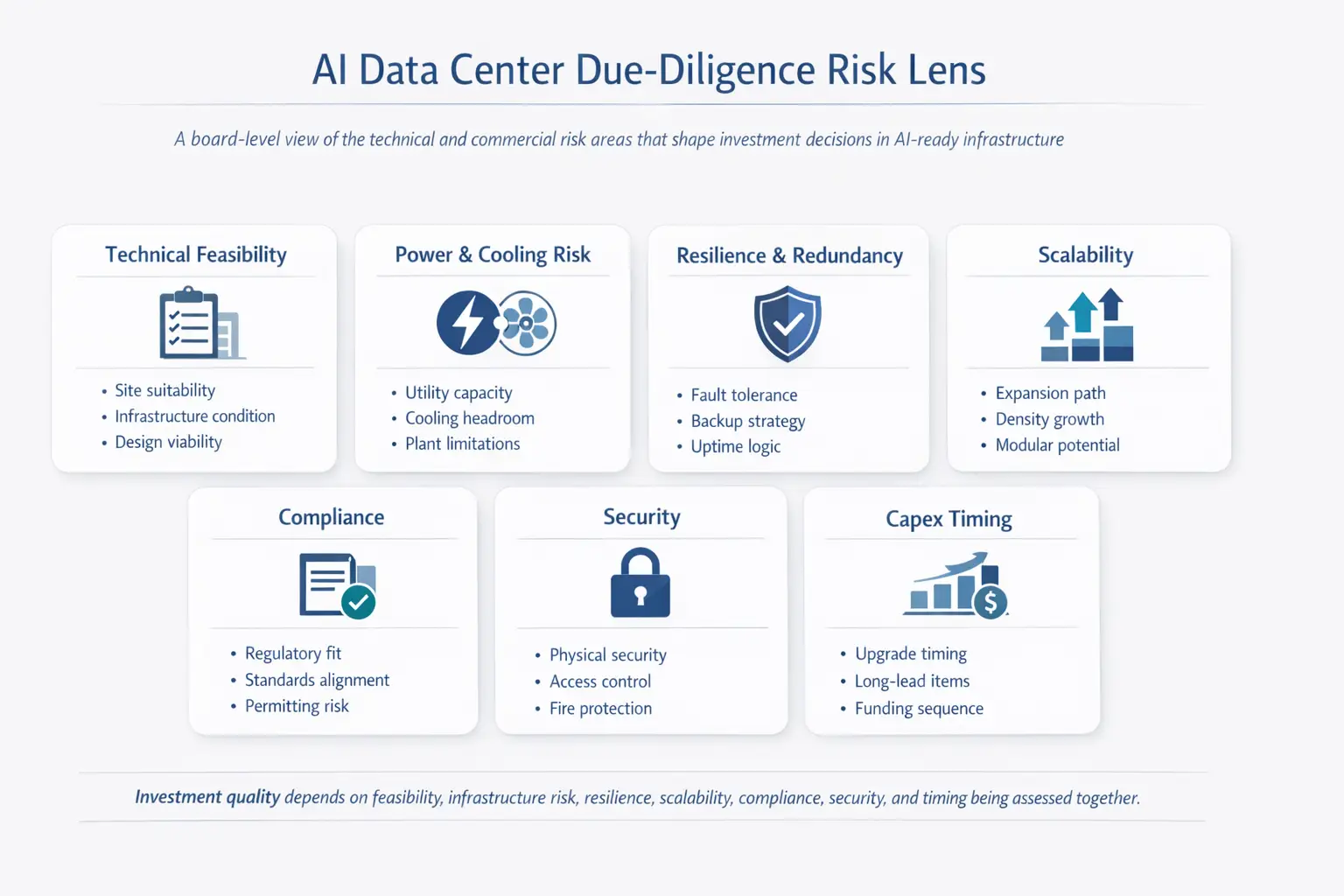

Due-Diligence Validation

For investors, lenders, buyers, and operators inheriting an asset, readiness review should assess not only current infrastructure but also feasibility, risk, resilience, scalability, compliance, and the practical cost of closing the readiness gap.

Typical outputs include:

- verified capacity rather than nominal capacity

- quantified retrofit risk

- clearer capex logic

- defined upgrade bottlenecks

- more credible decision support for retrofit versus new-build

This validation stage is where consultancy quality becomes visible. Azura’s documented approach combines site review, technical documentation review, CFD-led thermal analysis, electrical power-system analysis, and, where needed, Building Information Modeling (BIM)-based coordination and technical due diligence.

Its BIM capability also includes LOD300 to LOD500 models, clash detection, and 4D / 5D / 6D integration, which are especially relevant when retrofit work, live-site phasing, or multi-disciplinary coordination risk could undermine delivery.

Standards, Certification, and Reporting: What Each One Does

AI data center design benefits from standards and frameworks, but they do different jobs and should not be treated as interchangeable.

A clearer way to use them is this:

- ISO/IEC 22237 helps frame the broader facilities-and-infrastructure design approach, including availability, physical security, and energy-efficiency considerations

- ASHRAE thermal guidance supports thermal envelope, cooling, and environmental operating assumptions

- Uptime Tier strategy and certification help define resilience intent and infrastructure topology expectations

- Regional policy and reporting rules shape compliance, sustainability disclosure, and sometimes design priorities

The practical point is simple: standards and reporting rules do not design the facility for you, but they do shape how readiness, resilience, performance, and accountability should be assessed.

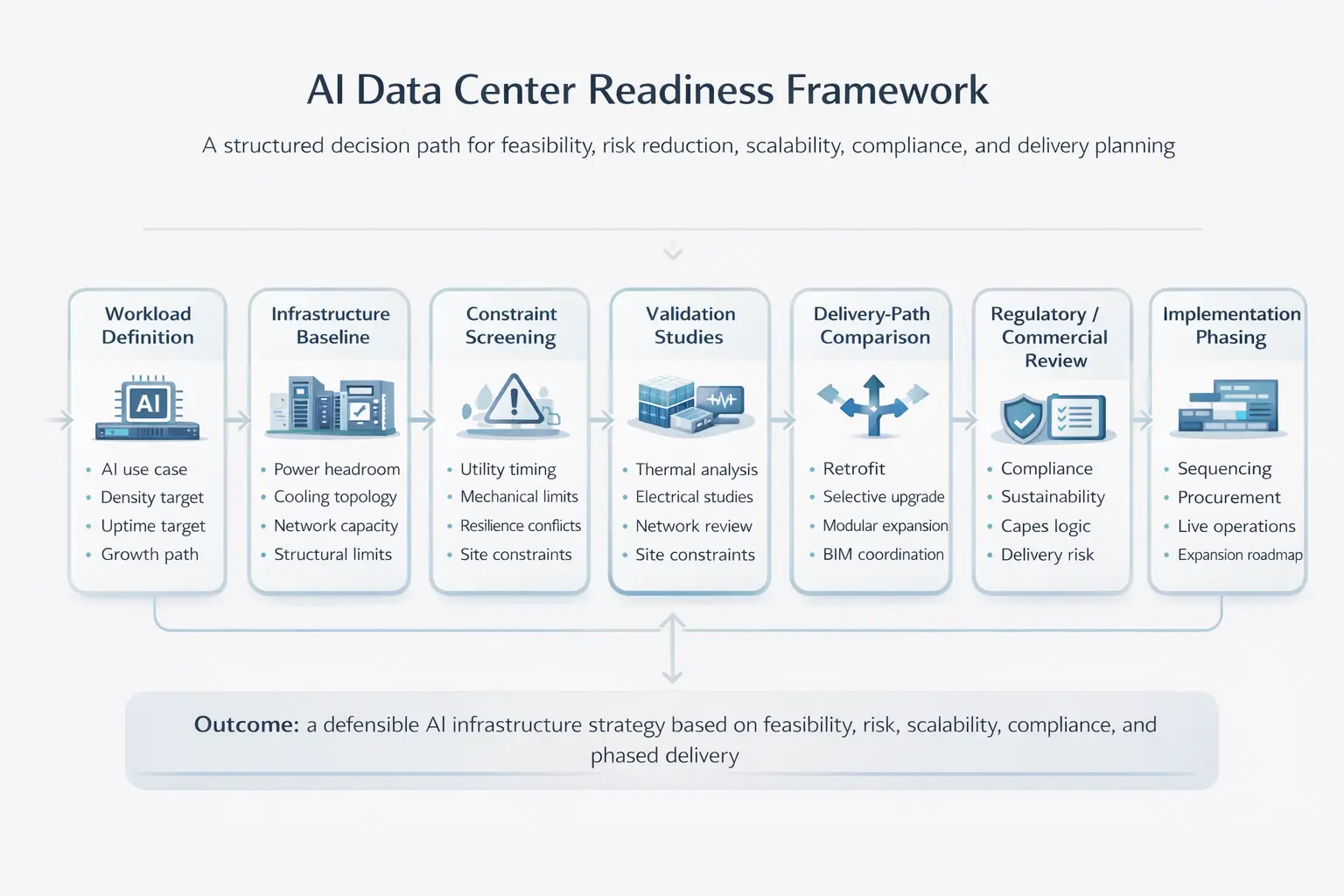

AI Data Center Readiness Framework for Owners, Operators, and Investors

The most useful way to assess an AI data center is to treat it as a readiness problem across linked domains rather than as a single yes-or-no question.

A practical readiness framework usually looks like this:

- Workload Definition – Define the likely AI use case, density range, uptime target, network behavior, and growth path.

- Infrastructure Baseline – Establish actual electrical, mechanical, structural, controls, and network headroom.

- Constraint Screening – Identify first-order blockers such as utility lead time, cooling mismatch, structural limitations, or resilience conflicts.

- Validation Studies – Run the engineering analysis needed to confirm or reject the concept.

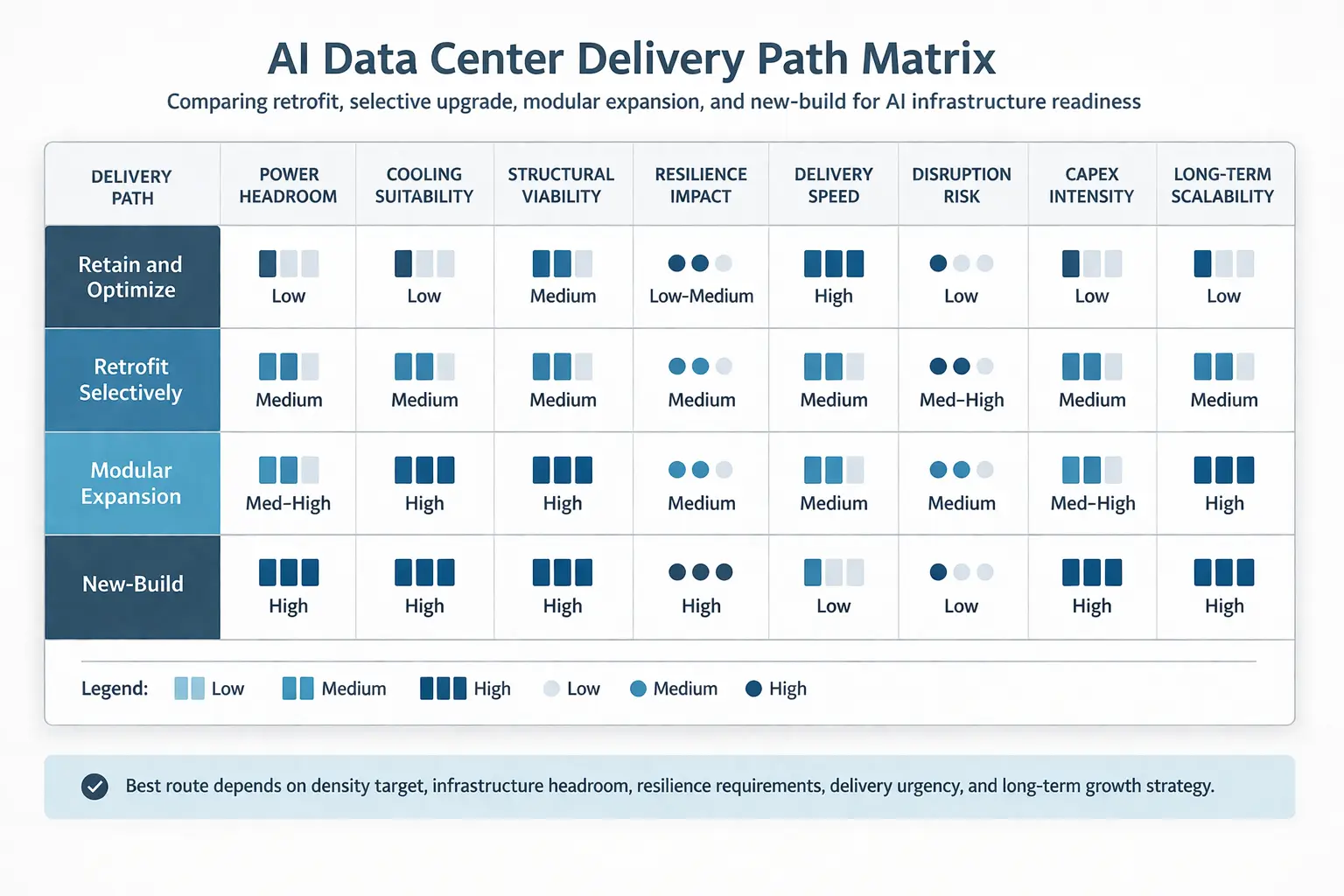

- Delivery-Path Comparison – Compare retrofit, phased upgrade, modular add-on, or new-build on cost, risk, schedule, and operational disruption.

- Commercial and Regulatory Review – Confirm permitting, sustainability, reporting, and contractual implications.

- Implementation Phasing – Align deployment sequence with live operations, procurement lead times, and utility availability.

A simple decision lens is often useful:

- Retain and optimize when existing infrastructure has credible headroom and the AI deployment is moderate

- Retrofit selectively when the building is viable but critical mechanical and electrical systems need targeted upgrade

- Expand modularly when growth is real but uncertainty remains around final density or customer demand

- Develop new-build when the utility, resilience, plant, or structural case for retrofit is weak

This framework is valuable because it separates technical possibility from commercial viability.
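The decision lens can be written down as a small rule set, which helps make the screening criteria explicit. The sketch below is one possible encoding: the boolean inputs, their names, and the order in which the rules are checked are all assumptions made for illustration, and a real assessment would rest on the validation studies rather than on four flags.

```python
from enum import Enum


class Route(Enum):
    RETAIN_AND_OPTIMIZE = "retain and optimize"
    RETROFIT_SELECTIVELY = "retrofit selectively"
    EXPAND_MODULARLY = "expand modularly"
    NEW_BUILD = "develop new-build"


def suggest_route(retrofit_case_is_weak, has_credible_headroom,
                  deployment_is_moderate, final_density_uncertain):
    """Map screening findings to a delivery route (illustrative ordering)."""
    if retrofit_case_is_weak:
        # Utility, resilience, plant, or structural case does not hold
        return Route.NEW_BUILD
    if has_credible_headroom and deployment_is_moderate:
        return Route.RETAIN_AND_OPTIMIZE
    if final_density_uncertain:
        # Growth is real, but final density or demand is not settled
        return Route.EXPAND_MODULARLY
    # Building is viable; critical M&E systems need targeted upgrade
    return Route.RETROFIT_SELECTIVELY


# Hypothetical site: viable building, no spare headroom, firm density target
route = suggest_route(retrofit_case_is_weak=False,
                      has_credible_headroom=False,
                      deployment_is_moderate=False,
                      final_density_uncertain=False)
print(route.value)
```

Writing the rules out this way tends to expose disagreements early, for example whether a weak utility case should override everything else or whether modular expansion should be considered first.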

Choose An AI-Driven Evolution For Your Data Center

Ready to elevate your data center to meet the demands of the AI-driven future? Let Azura Consultancy provide you with cutting-edge solutions that ensure efficiency, scalability, and sustainability. Our team of experts is here to help you harness the full potential of AI and innovative technologies. Contact us today to start your journey toward a smarter, more powerful data center.

How Azura Consultancy Can Help

Azura Consultancy supports AI data center projects with a combination of front-end engineering, infrastructure design, specialist analysis, and technical due diligence. Its documented data center capability includes feasibility studies, site selection, design of data halls and critical MEP systems, modular solution support, heat recovery, on-site power generation, energy storage, automation and controls, network infrastructure design, CFD analysis, electrical power-system analysis, reliability studies, BIM coordination, and support for Uptime Tier certification pathways.

Relevant support can include:

- feasibility studies and site selection

- design of data halls and critical MEP infrastructure

- cooling strategy and heat-rejection review

- CFD analysis and thermal validation

- electrical power-system analysis, including short-circuit and harmonics review

- reliability and availability studies

- Uptime-aligned design and certification support

- network infrastructure and fiber-design input

- BIM coordination, clash detection, and phased-delivery support

- technical due diligence for acquisitions, funding, or expansion decisions

This is particularly valuable in four situations:

- Site and concept selection, when clients need to know whether an AI strategy is realistic in a given location

- Design development, when density, resilience, and sustainability targets need to be reconciled

- Retrofit and brownfield assessment, where hidden constraints can undermine apparently attractive assets

- Investment and lender review, where technical assumptions need to be tested objectively

Azura’s public certifications strengthen this offer in a way that is relevant to real project decisions rather than brand positioning alone. The certifications page includes ISO 9001:2015 as a firm-level quality signal, together with professional credentials including Uptime ATD / ATD Expert, LEED AP, PMP, ASHRAE HBDP, and ASHRAE HFDP.

Used in context, those credentials reinforce Azura’s capability across data center resilience, quality management, sustainability, building systems, and structured project delivery.

For investor, lender, and acquisition-led decisions, the strength of the engineering review matters as much as the headline capacity claim. Azura’s technical due-diligence capability explicitly frames data center reviews around feasibility, power and cooling systems, resiliency and redundancy, scalability, regulatory compliance, and security, which is the right lens for testing whether “AI-ready” claims are bankable in practice.

The value is not only better engineering. It is better decision quality: clearer constraints, fewer false assumptions, and a more credible path from concept to operation.

Practical Next Steps

If you are assessing AI data center readiness, the first reviews should usually be:

- define the target AI workload profile and expected density range

- confirm utility power availability and delivery timing

- review cooling topology against the intended load profile

- assess the resilience model and expansion assumptions

- run thermal and electrical validation studies

- review network architecture, pathway capacity, and interconnect assumptions

- compare retrofit versus phased expansion versus new-build

- check regional compliance, sustainability, and reporting implications

- translate the findings into a staged capex and delivery roadmap

If the project is acquisition-led, the same sequence should be wrapped into a technical due-diligence process before major commercial commitments are made.

Conclusion

AI data centers are not defined by marketing language or by the presence of accelerated compute alone. They are defined by whether the surrounding infrastructure can support higher-density, high-value workloads with credible power, cooling, network performance, resilience, and operational control.

For owners, operators, developers, investors, and lenders, the difference between a plausible AI strategy and a defensible one usually comes down to front-end engineering quality, disciplined validation, and a realistic understanding of constraint.

Azura Consultancy’s combination of data center design capability, technical due diligence, BIM-enabled coordination, specialist engineering analysis, and relevant quality and professional certifications makes it well positioned to support those decisions with practical engineering rigor rather than generic “AI readiness” claims.

For organizations assessing AI expansion, the sensible next step is not to overstate readiness. It is to validate it with support from experienced data center consultants.

AI Data Center: Top FAQs

An AI Data Center is a specialized facility designed to support the intensive computational requirements of artificial intelligence (AI) and machine learning (ML) applications. These data centers are equipped with high-performance hardware like GPUs, TPUs, and other accelerators to handle the significant processing power and storage needs of AI workloads.

The main difference is not simply compute hardware. AI facilities usually require higher-density power and cooling design, tighter network performance, more integrated controls, and more careful expansion planning.

GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units) are crucial in AI Data Centers because they are designed to handle the parallel processing required for AI and ML tasks. These units can process multiple operations simultaneously, significantly speeding up the computation of complex AI models compared to traditional CPUs (Central Processing Units).

Liquid cooling is increasingly important in AI data centers because densely packed, high-performance hardware can produce more heat than air cooling removes efficiently. Coolant absorbs heat at or near the components and carries it to the heat-rejection plant, which helps keep operating temperatures within limits. It is not a stand-alone answer, however: it also affects plant design, controls, and maintenance.

AI is used in data center operations to enhance efficiency and reliability. AI-driven systems can predict and manage energy usage, optimize cooling, perform predictive maintenance to prevent hardware failures, and balance workloads to ensure peak performance. These optimizations reduce operational costs and improve overall data center efficiency.

Exascale computing refers to systems capable of at least one exaFLOPS, that is, a billion billion (10^18) floating-point operations per second. Computing power at this scale matters for the massive datasets and complex models used in advanced AI applications, because it shortens processing and training times.

AI Data Centers contribute to sustainability by implementing energy-efficient technologies and practices. AI-powered systems optimize energy consumption, integrate renewable energy sources, and use heat recycling systems to repurpose excess heat. These measures reduce the environmental impact and operational costs of data centers.

Managing an AI Data Center involves several challenges, including ensuring sufficient power and cooling for high-density hardware, maintaining robust security to protect sensitive data, managing large volumes of data efficiently, and continuously updating infrastructure to keep pace with rapidly evolving AI technologies.

The future of AI Data Centers is marked by the adoption of emerging technologies such as neuromorphic computing and quantum computing, which promise to further enhance processing capabilities. Additionally, there will be a continued focus on sustainability, with AI-driven optimizations and innovative energy solutions playing a key role in reducing environmental impact and operational costs.

AI data centers are powered by a combination of advanced high-performance computing hardware, such as GPUs and TPUs, and sophisticated power delivery systems. Additionally, many AI data centers are integrating renewable energy sources like solar and wind power to ensure a more sustainable operation. AI-driven energy management systems further optimize the use of available power, balancing loads, and reducing waste.

The power consumption of an AI data center depends on its size and the density of its hardware. AI data centers can consume anywhere from a few megawatts (MW) to hundreds of megawatts, with some of the largest high-density facilities drawing 100 MW or more to support their computational workloads. It is anticipated that, in the coming years, a single facility may break the 1 GW barrier.

Building an AI data center involves several key steps:

- Planning: Assessing requirements for computing power, storage, and cooling.

- Design: Creating a layout that supports high-density hardware and efficient cooling.

- Construction: Building the physical infrastructure with advanced power and cooling systems.

- Installation: Setting up servers, GPUs, TPUs, networking equipment, and other necessary hardware.

- Optimization: Implementing AI-driven management systems to enhance performance and efficiency.

- Maintenance: Establishing protocols for regular maintenance and upgrades to keep the data center running smoothly.

AI data center networking involves the use of high-speed, low-latency network infrastructure to support the massive data transfer and communication needs of AI workloads. This includes the deployment of advanced networking equipment like high-bandwidth switches and routers, as well as optimized network architectures that can handle the parallel processing demands of AI and ML applications. Efficient networking is crucial for ensuring seamless data flow and minimizing bottlenecks in AI data centers.

Power availability, cooling capability, resilience, scalability, compliance, site constraints, network architecture, and the cost and disruption associated with closing any readiness gap.
