Introduction

AI as a Service (AIaaS) delivers artificial intelligence capabilities on demand. Machine-learning-as-a-service platforms, for example, let you train, fine‑tune, deploy, and monitor models without building and operating the heavy infrastructure yourself. Instead of buying GPUs, standing up data pipelines, and staffing specialized MLOps teams, enterprises subscribe to managed services that bundle compute, tooling, security, and lifecycle operations. The result is faster experimentation, lower up‑front risk, and a direct line from data to decisions, whether you’re rolling out predictive maintenance, intelligent customer support, fraud detection, or city‑scale analytics.

AIaaS has surged because it meets organizations where they are: tight timelines, limited in‑house AI skills, and a mandate to show value quickly. By consuming AI via APIs and managed platforms, teams can pilot use cases in days, scale winners globally, and keep spend aligned to usage, all while integrating with existing DevOps processes, colocation footprints, or Data Center as a Service (DCaaS) environments.

Core AIaaS Model

Model Lifecycle Services

- Training & Fine‑Tuning: Managed pipelines for data prep, distributed training, hyperparameter search, checkpointing, and evaluation.

- Inference & Serving: Autoscaled endpoints (CPU/GPU/accelerator) with A/B testing, canary releases, and model rollback.

- MLOps Tooling: Feature stores, experiment tracking, drift/quality monitoring, and retraining triggers.
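The drift monitoring and retraining triggers listed above can be illustrated with a small sketch. It computes the Population Stability Index (PSI), one common drift measure, over pre-binned feature proportions and flags retraining when the score crosses a threshold. The function names and the 0.2 threshold (a widely used rule of thumb, not a provider default) are assumptions for illustration; managed platforms expose their own drift metrics and trigger mechanisms.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI between two distributions given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so the logarithm stays defined.
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def should_retrain(psi: float, threshold: float = 0.2) -> bool:
    """Hypothetical trigger rule: retrain once drift reaches the threshold."""
    return psi >= threshold


# Illustrative bin proportions: training-time baseline vs. recent traffic.
baseline = [0.25, 0.25, 0.25, 0.25]
recent = [0.10, 0.20, 0.30, 0.40]
drift = population_stability_index(baseline, recent)
print(f"PSI={drift:.3f}, retrain={should_retrain(drift)}")
```

In practice the same check would run on a schedule per feature, with the baseline taken from the training snapshot.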

Ready‑to‑Use AI APIs

- Natural Language (NLP): Text classification, summarization, translation, conversational agents.

- Computer Vision: Object detection, OCR, quality inspection, safety monitoring.

- Speech & Audio: Speech‑to‑text, text‑to‑speech, speaker verification.

- Recommendation & Forecasting: Next‑best‑action, demand forecasting, anomaly detection.

Pre‑Built vs. Custom

- Pre‑built models/APIs accelerate time‑to‑value and are ideal for common tasks with commodity data.

- Custom models (fine‑tuned or trained from scratch) win when domain data, governance, or accuracy needs exceed what off‑the‑shelf models can deliver.

Business Benefits of AIaaS

- Lower Barrier to Entry: Launch proofs‑of‑concept without purchasing hardware or assembling large platform teams.

- Pay‑Per‑Use Economics: Align cost to value with usage‑based inference and scheduled/triggered retraining cycles.

- Speed to Insight: “Predictive analytics as a service” puts pipelines, feature stores, and notebooks at your fingertips—shortening the loop from question to answer.

- Elastic Scale: Burst for quarterly peaks, then scale back.

- Built‑In Reliability: SLAs on availability, latency, and throughput, plus managed upgrades and patches.
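To make the pay‑per‑use point concrete, the sketch below compares usage‑based inference pricing with a fixed monthly cost for self‑operated capacity and computes the break‑even request volume. All prices and volumes are hypothetical placeholders, not quotes from any provider.

```python
def usage_cost(requests_per_month: int, price_per_1k_requests: float) -> float:
    """Monthly bill under usage-based pricing."""
    return requests_per_month / 1000 * price_per_1k_requests


def break_even_requests(fixed_monthly_cost: float, price_per_1k_requests: float) -> float:
    """Request volume at which usage-based spend equals the fixed cost."""
    if price_per_1k_requests <= 0:
        raise ValueError("price_per_1k_requests must be positive")
    return fixed_monthly_cost / price_per_1k_requests * 1000


# Hypothetical figures for illustration only.
PRICE_PER_1K = 0.50        # currency units per 1,000 inference calls
FIXED_MONTHLY = 5000.0     # amortized hardware, power, and staffing

pilot_bill = usage_cost(2_000_000, PRICE_PER_1K)
threshold = break_even_requests(FIXED_MONTHLY, PRICE_PER_1K)
print(f"Pilot bill: {pilot_bill:.2f}; break-even at {threshold:,.0f} requests/month")
```

Below the break‑even volume the usage‑based model is cheaper under these assumptions; above it, dedicated or reserved capacity may be worth evaluating.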

AIaaS Market Forecast

The two curves are illustrative CAGR extrapolations from a notional 2025 base; they show plausible growth paths rather than a single “true” prediction. The conservative case assumes a mid-teens CAGR; the aggressive case assumes a high-20s CAGR. The spread reflects uncertainty in scope, pricing, supply, and regulation, any of which can materially swing outcomes.

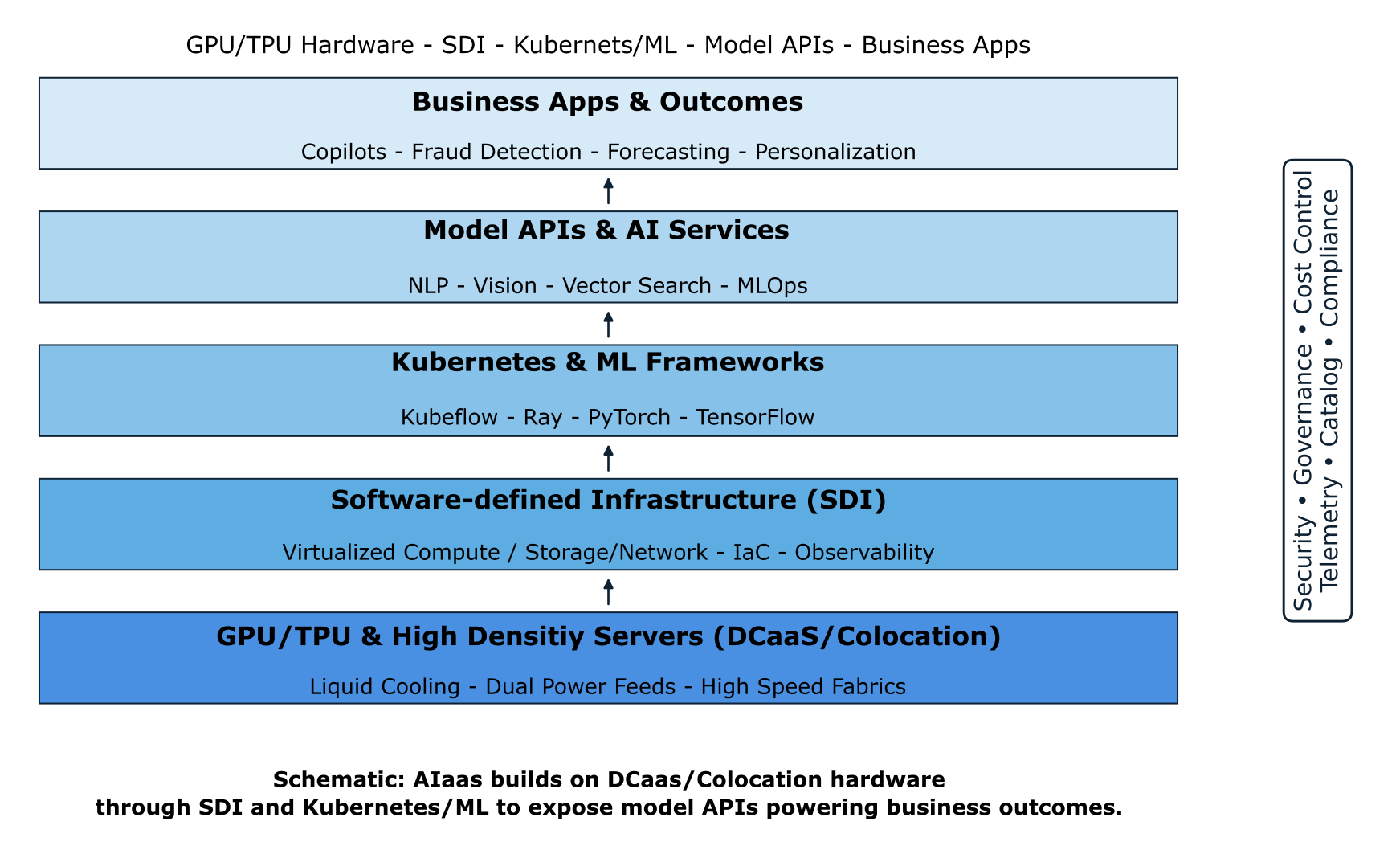
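For readers who want to reproduce this kind of extrapolation, a compound annual growth rate projection follows value(t) = base × (1 + CAGR)^t. The sketch below uses an index of 100 for the 2025 base and picks 15% and 28% as stand‑ins for "mid-teens" and "high-20s"; those specific rates and the five‑year horizon are assumptions for illustration, not figures from the chart.

```python
from typing import List


def project_cagr(base: float, cagr: float, years: int) -> List[float]:
    """Compound a base value forward; index 0 is the base year."""
    return [base * (1 + cagr) ** t for t in range(years + 1)]


BASE_INDEX = 100.0  # notional 2025 market size, expressed as an index
conservative = project_cagr(BASE_INDEX, 0.15, 5)  # assumed mid-teens rate
aggressive = project_cagr(BASE_INDEX, 0.28, 5)    # assumed high-20s rate

for offset, (low, high) in enumerate(zip(conservative, aggressive)):
    print(f"{2025 + offset}: conservative={low:6.1f}  aggressive={high:6.1f}")
```

Under these assumed rates the conservative path roughly doubles over five years while the aggressive path grows to more than three times the base, which shows how quickly the two scenarios diverge.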

AIaaS Stack — From Data Center to Business Outcomes

Artificial Intelligence as a Service (AIaaS) is not a single product—it is a layered ecosystem that begins with resilient data center infrastructure and culminates in measurable business outcomes. Each layer of the stack contributes distinct value, while also depending on the foundations beneath it:

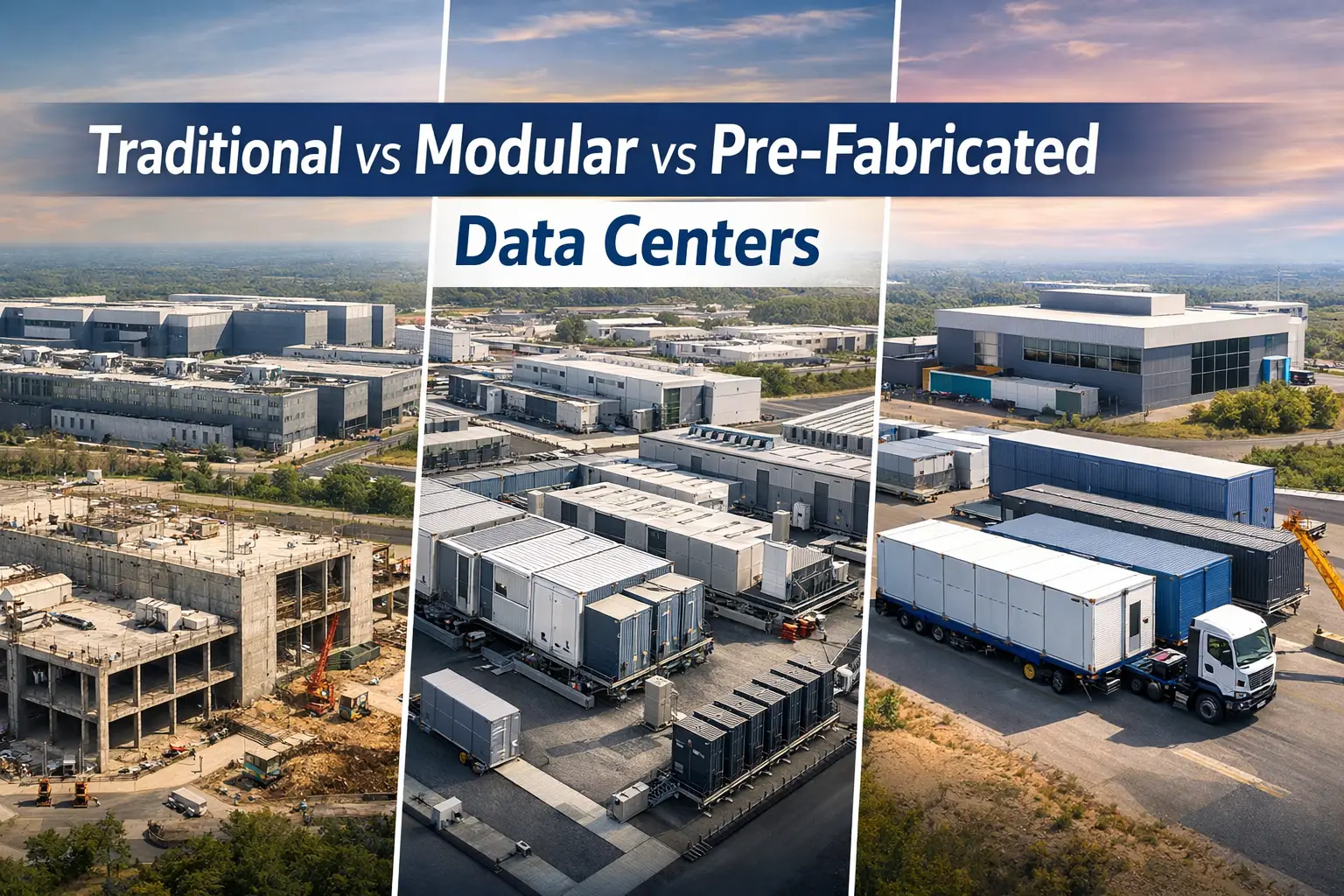

- GPU/TPU & High-Density Servers (DCaaS/Colocation)

The foundation of AIaaS lies in compute-intensive infrastructure. High-density racks equipped with GPUs and TPUs are deployed within colocation or DCaaS facilities engineered for Tier III or Tier IV reliability. CFD airflow modeling, liquid-assisted cooling, and dual power feeds ensure performance at scale while keeping PUE and WUE within sustainability targets.

- Software-Defined Infrastructure (SDI)

On top of the hardware, SDI abstracts compute, storage, and network resources. Virtualization platforms and Infrastructure-as-Code (IaC) frameworks allow elastic provisioning, dynamic scaling, and precise observability, which is key for AI workloads that can spike unpredictably. Integration with DCIM and SCADA systems enhances real-time monitoring, energy optimization, and fault tolerance.

- Kubernetes & ML Frameworks

AI workloads require orchestration. Managed Kubernetes, Kubeflow, PyTorch, TensorFlow, and distributed training frameworks such as Ray enable enterprises to run microservices, model training, and inference pipelines reliably. Colocation and DCaaS providers deliver low-latency fabrics and interconnectivity that support east–west traffic patterns in clustered GPU environments.

- Model APIs & AI Services

With infrastructure and orchestration in place, providers expose APIs for natural language processing, computer vision, predictive analytics, and generative AI. These APIs, delivered as managed services, shift AI from an R&D function into an operational business utility, accessible via secure, consumption-based endpoints.

- Business Applications & Outcomes

The top layer is where AIaaS demonstrates tangible value. AI copilots, fraud detection, demand forecasting, and smart vision systems plug into enterprise workflows. By leveraging colocation-enabled AIaaS, enterprises accelerate time-to-insight, reduce CapEx, and maintain compliance with regulatory and sustainability requirements while achieving competitive differentiation.
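The PUE and WUE targets mentioned for the infrastructure layer reduce to two simple ratios: Power Usage Effectiveness is total facility energy divided by IT equipment energy, and Water Usage Effectiveness is site water use divided by IT equipment energy (liters per kWh). The sketch below computes both; the meter readings are hypothetical values chosen for illustration.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: 1.0 would mean zero facility overhead."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    return total_facility_kwh / it_equipment_kwh


def wue(site_water_liters: float, it_equipment_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    return site_water_liters / it_equipment_kwh


# Hypothetical readings for one reporting period.
IT_KWH = 1_000_000.0
FACILITY_KWH = 1_300_000.0
WATER_LITERS = 400_000.0

print(f"PUE = {pue(FACILITY_KWH, IT_KWH):.2f}")
print(f"WUE = {wue(WATER_LITERS, IT_KWH):.2f} L/kWh")
```

Tracking both ratios over the same period makes the trade‑off visible: cooling strategies that lower PUE can raise water use, and vice versa.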

Infrastructure Requirements for AIaaS

While AIaaS abstracts complexity, performance and cost still hinge on the underlay. For consistently fast training and low‑latency inference, you’ll want:

- Accelerated Compute: Dense GPU/TPU racks with robust power and cooling, ideally in data centers engineered for high availability and energy efficiency. Azura designs Tier‑aligned, high‑density data hall solutions, power distribution, and resiliency architectures appropriate for AI workloads.

- Cooling at Scale: AI racks can exceed traditional power densities; options include close‑coupled cooling, containment, liquid‑assisted or direct liquid cooling, and facility‑level thermal strategies. Where projects integrate with campus or city infrastructure, district cooling can further improve efficiency and operational cost.

- Power Strategy & Sustainability: Stable utility feeds, backup generation, and opportunities to source renewables or pair with on‑site generation and storage for resilience and lower emissions. Azura supports power and energy planning, including CHP/tri‑gen concepts and pathways to clean energy integration.

- High‑Performance Storage: NVMe and parallel file systems for training; object storage and vector databases for inference and retrieval‑augmented generation.

- Low‑Latency Networking: 25/100/200/400 GbE east‑west fabrics and WAN backbones with QoS. For edge AI and mobility use‑cases, 5G design (including slicing) and fiber/DWDM backhaul are key—areas where Azura’s telecom engineering and network design services accelerate delivery.

- Edge & Smart‑City Readiness: When models run near sensors and citizens, siting and interconnection matter. Azura’s smart‑city and data‑center expertise helps place compute where it creates the most value—at the edge, in the metro, or in the core.

- Site Selection & Feasibility: For greenfield or expansion, GIS‑driven analysis supports route planning, latency mapping, and risk evaluation to pick optimal regions and interconnects.
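As a rough illustration of the latency mapping used in site selection, propagation delay over fiber can be estimated from route length alone. The sketch assumes a typical refractive index of about 1.468 for single‑mode fiber and ignores switching, queuing, and serialization delay, so it gives a lower bound rather than a measured figure. The function names and the example budget are hypothetical.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # in vacuum


def fiber_rtt_ms(route_km: float, refractive_index: float = 1.468) -> float:
    """Round-trip propagation delay over a fiber route, in milliseconds."""
    one_way_s = route_km * refractive_index / SPEED_OF_LIGHT_KM_PER_S
    return 2 * one_way_s * 1000


def within_latency_budget(route_km: float, budget_ms: float, overhead_ms: float = 0.0) -> bool:
    """Check a candidate site against a round-trip budget.

    overhead_ms is an assumed allowance for equipment and processing delay.
    """
    return fiber_rtt_ms(route_km) + overhead_ms <= budget_ms


# Hypothetical candidate sites and their fiber route lengths to the users.
candidates = {"metro-edge": 40.0, "regional-core": 600.0}
for name, km in candidates.items():
    ok = within_latency_budget(km, budget_ms=5.0, overhead_ms=1.0)
    print(f"{name}: RTT >= {fiber_rtt_ms(km):.2f} ms, fits 5 ms budget: {ok}")
```

Real route lengths usually exceed straight‑line distance, which is why GIS‑based route planning matters for the estimate.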

Data Security & Governance

AIaaS compounds familiar data‑governance concerns with model risk and algorithmic accountability. A robust program should cover:

- Data Residency & Sovereignty: Keep training data, features, and outputs inside required jurisdictions; document cross‑border transfers and controls. Azura’s ICT and due‑diligence practices align infrastructure and policies with regulatory obligations during planning and vendor selection.

- Encryption & Access Control: Enforce encryption in transit/at rest, strong identity for API access, KMS/HSM‑backed key control, and environment isolation for sensitive workloads.

- Privacy by Design: Minimize PII exposure; apply anonymization, tokenization, or synthetic data where feasible; maintain audit trails.

- Responsible AI & Compliance: Establish policies for dataset provenance, model explainability, bias testing, and incident response. Use change‑controlled promotion (dev→staging→prod) and document model cards and data lineage for audits. Azura’s technical due diligence framework stress‑tests these controls before commitments are made.
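The tokenization idea in the privacy controls above can be sketched with a keyed hash: PII fields are replaced by HMAC‑SHA256 digests before the data reaches a training or inference pipeline. This is pseudonymization rather than full anonymization, since anyone holding the key can recompute the mapping. The field names and the inline demo key are assumptions; in a real deployment the key would come from the KMS/HSM rather than source code.

```python
import hashlib
import hmac
from typing import Dict, Iterable


def pseudonymize(value: str, key: bytes) -> str:
    """Deterministic keyed token for a single PII value."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def tokenize_record(
    record: Dict[str, str], pii_fields: Iterable[str], key: bytes
) -> Dict[str, str]:
    """Return a copy of the record with the listed PII fields tokenized."""
    pii = set(pii_fields)
    return {
        field: pseudonymize(value, key) if field in pii else value
        for field, value in record.items()
    }


# Demo key only; a production key should be fetched from a managed key store.
DEMO_KEY = b"demo-key-do-not-use-in-production"

record = {"customer_email": "jane@example.com", "plan": "enterprise"}
safe = tokenize_record(record, ["customer_email"], DEMO_KEY)
print(safe)
```

Because the token is deterministic for a given key, joins and aggregations across datasets still work, while rotating the key breaks linkability to earlier exports.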

Unlock AI Power With Confidence

From GPU-ready data halls to enterprise AI integration, Azura Consultancy designs, validates, and delivers AIaaS strategies that perform.

Azura Consultancy’s AIaaS Strategy & Deployment

Azura partners with you from strategy to steady‑state operations:

- Advisory & Architecture: We map business goals to AIaaS capabilities, shortlist providers, and design reference architectures that integrate with your networks, identity, and data estates—grounded in ICT planning and feasibility.

- Data Center Design for AI: From high‑density rack layouts and electrical one‑lines to Uptime Tier pathways and CFD‑guided thermal design for AI white spaces, our engineers tailor facilities to GPU/TPU workloads.

- Power & Cooling Strategy: We align AI growth plans with utility capacity, on‑site generation/storage, and scalable cooling systems—extending to district‑level solutions where appropriate.

- Carrier & Cloud Interconnect: We design 5G, optical/DWDM, and backbone links to bring models closer to users and data, reducing latency and egress cost.

- Program & Change Management: Structured governance, risk, and quality controls keep your program on time and on budget—backed by Azura’s project‑management methodology.

- Due Diligence & Procurement: Independent technical due diligence and LTA‑style reviews de‑risk vendor selection, SLAs, and sustainability claims before contract award.

- Smart‑City & Edge Integration: For city agencies and operators, we blend AIaaS with smart‑infrastructure programs—designing 5G/RAN, core networks, and data hubs that safely operationalize AI at scale.

Links to related articles:

See also: Colocation Data Centers, Edge Data Centers, Data Center as a Service (DCaaS).

Related Azura pages that support your AIaaS journey: Data Center Engineering Solutions (design & certification), Technical Due Diligence (risk & readiness), Project Management (delivery governance), Telecommunications Network Design Services (5G/fiber backbones), and District Cooling Solutions (high‑density thermal strategy).

Why this matters now

AIaaS turns AI from a multi-year capital program into a service you can switch on, integrate, and scale. With the right foundation (facilities engineered for AI, resilient power and cooling, low-latency networks, and audited governance), organizations can move from pilot to production with confidence, speed, and measurable ROI. Azura brings those building blocks together.